
Tag Archives: security

An Exchange with HackerOne

In a recent episode I rambled about a system that pays good guys for finding and reporting security holes in the software we rely on every day. Fired up with enthusiasm for the cause, I sent this message to HackerOne:

I appreciate what you are doing here, and would love if there were a tip jar where I could contribute to the rewards you give out for making the world a better place. Like Zaphod, I’m just a guy, you know? But I’d happily pitch a little bit each month to promote what you do here, and to support the people who actually make the Internet less unsecure.

I debated “insecure” versus “unsecure”, and went with “un” for reasons I don’t exactly recall. Beer may have been a factor.

I got a very nice letter back.

Thank you so much for reaching out to us with this feedback on what we are doing. We appreciate you taking the time to reach out to speak with us about what you think of the program and how you would like to participate it make HackerOne a success.

You are correct about us not having a tip jar, however, our community can support us by word of mouth let others know what we do and what our goal is and if you are a hacker or know any white hat hackers we encourage you all to use our platform and help us with making the internet safer.

We really do appreciate you reaching out and I am going to share your message with the rest of the company.

Best,

Shay | HackerOne Support

The missing word and tough-to-parse sentence make me think that this was a hand-typed response. I am happy to contribute to their word-of-mouth buzz. I do not fit the profile of the geek HackerOne is looking for, and I suspect no one who will ever read these words is pondering the question “How can I break things and still be a good guy?” But if that’s you, head to HackerOne.

On the other hand, if you own a commercial Web site and want to get a major security audit, consider posting a bounty at HackerOne. You’ll get some really skilled people trying to break in, only in this case they won’t rob you blind if they get in.

Standing Rock and Internet Security

At the peak of the Standing Rock protest, a small city existed where none had before. That city relied on wireless communications to let the world know what was going on, and to coordinate the more mundane day-to-day tasks of providing for thousands of people. There is strong circumstantial evidence that our own government performed shenanigans on the communications infrastructure to not only prevent information from reaching the rest of the world, but also to hack people’s email accounts and the like.

Cracked.com, an unlikely source of “real” journalism, produced a well-written article with links to huge piles of documented facts. (This was not the only compelling article they produced.) They spent time with a team of security experts on the scene, who showed the results of one attack: When all the secure wifi hotspots in the camp were attacked, rendering them unresponsive, a new, insecure hotspot suddenly appeared. When one of the security guys connected to it, his gmail account was attacked.

Notably, a plane was flying low overhead – a very common model of Cessna, but one our government is known to fit with just the sort of equipment needed for this sort of dirty work. The Cessna was owned by law enforcement, but its flight history is secret.

What does that actually mean? It means that in a vulnerable situation, where communication depends on wireless networks, federal and state law enforcement agencies have the tools to seriously mess with you.

“But I only use secure Internet connections,” you say. “HTTPS means that people between you and the site you’re talking to can’t steal your information.” Alas, that’s not quite true. What HTTPS means is that connections to your bank or Gmail can only be monitored by someone endorsed by entities your browser has been told to trust completely. On that list: The US Government, the Chinese government, other governments, and more than a hundred privately-owned corporations. Any of those, or anyone any of those authorities chooses to endorse, or anyone who manages to hack one of those hundred-plus authorities (this has happened) can convince your browser that there is no hanky-panky going on. It shouldn’t surprise you that the NSA has a huge operation to do just that.

The NSA system wasn’t used at Standing Rock (or if it was, that effort was separate from the documented attacks above), because they don’t need airplanes loaded with exotic equipment. But those airplanes do exist, and now we have evidence that state and local law enforcement, and quite possibly private corporations as well, are willing to use them.

The moral of the story is, I guess, “don’t use unsecured WiFi”. There’s pretty much nothing you can do about the NSA. It would be nice if browsers popped up an alert like “Normally this site is vouched for by Verisign, but this time the US Government is vouching for it. Do you want to continue?” But they don’t, and I haven’t found a browser plugin that adds that capability. Which is too bad.
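The alert I’m wishing for boils down to “remember who vouched for this site last time, and complain when that changes.” Here is a minimal sketch of that idea in Python. The `check_issuer` helper and the in-memory dictionary are made up for illustration; a real tool would have to read the issuer off the live connection (Python’s `ssl.SSLSocket.getpeercert()` reports it) and keep its memory somewhere permanent. This is the shape of the thing, not a working plug-in.

```python
def check_issuer(known_issuers, host, issuer):
    """Compare the issuer seen now with the one remembered for this host.

    known_issuers maps a host name to the issuer seen on an earlier visit.
    Returns "first-visit", "ok", or a warning message.
    (Hypothetical helper, invented for this illustration.)
    """
    previous = known_issuers.get(host)
    if previous is None:
        # Nothing to compare against yet, so remember this issuer.
        known_issuers[host] = issuer
        return "first-visit"
    if previous == issuer:
        return "ok"
    return (f"Normally {host} is vouched for by {previous}, "
            f"but this time {issuer} is vouching for it.")


seen = {}
print(check_issuer(seen, "bank.example", "Verisign"))
print(check_issuer(seen, "bank.example", "Verisign"))
print(check_issuer(seen, "bank.example", "Some Other Authority"))
```

The obvious weakness is the first visit: if the bad guys are already in the middle the first time you connect, the sketch happily remembers the wrong issuer.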

Edit to add: While looking for someone who perhaps had made a browser plug-in to detect these attacks, I came across this paper which described a plugin that apparently no longer exists (if it was ever released). It includes a good overview of the situation, with some thoughts that hadn’t occurred to me. It also shows pages from a brochure for a simple device that was marketed in 2009 to make it very easy for people with CA authority to eavesdrop on any SSL-protected communication. Devices so cheap they were described as “disposable”.

The Chinese are Attacking!

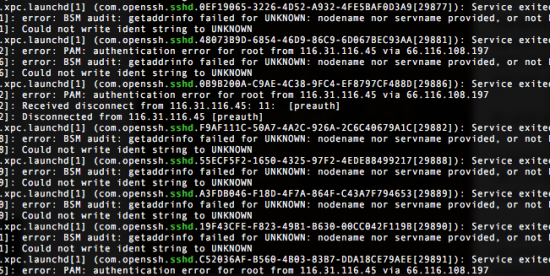

Every once in a while I check the logs of the server that hosts this blog, to see if there are any shenanigans going on. And every time I check, there ARE shenanigans. The Chinese have been slowly, patiently poking at this machine for a long, long time. The attacks will not succeed; they are trying to log in as “root”, the most powerful account on any *NIX-flavored computer, but on my server root is not allowed to log in from the outside, precisely because it is so powerful.

But the attack itself is an interesting look at the world of institutionalized hacking. It is slow, and patient, only making an attempt every thirty seconds or so. Many attack-blockers use three tries in a minute to detect monkey business; this will fly under that radar. Trying fewer than 200,000 password guesses per day limits the effectiveness of a brute-force attack, but over time (and starting with the million most common passwords), many servers will be compromised.
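To see why the slow approach slips past a “three tries in a minute” rule, and what a more patient rule might look like, here is a small Python sketch. The one-hour window and the limit of 20 failures are numbers I made up for illustration, and `SlowAttackDetector` is a toy of my own, not the way any particular attack-blocker works.

```python
from collections import defaultdict, deque

# One source guessing every thirty seconds, all day long.
ATTEMPT_INTERVAL_S = 30
print(24 * 60 * 60 // ATTEMPT_INTERVAL_S, "guesses per day from one source")


class SlowAttackDetector:
    """Flag a source that piles up failed logins over a long window.

    window_s and max_failures are illustrative values, not recommendations.
    """

    def __init__(self, window_s=3600, max_failures=20):
        self.window_s = window_s
        self.max_failures = max_failures
        self.failures = defaultdict(deque)  # source -> recent failure times

    def record_failure(self, source, timestamp):
        """Record one failed login; return True if the source is over the limit."""
        times = self.failures[source]
        times.append(timestamp)
        # Forget failures that have aged out of the window.
        while times and times[0] <= timestamp - self.window_s:
            times.popleft()
        return len(times) > self.max_failures


# A rule in the spirit of "three tries in a minute" never fires on this attack...
per_minute = SlowAttackDetector(window_s=60, max_failures=2)
# ...but a rule that watches a whole hour does.
per_hour = SlowAttackDetector()

minute_rule_fired = False
blocked_at = None
for i in range(200):
    t = i * ATTEMPT_INTERVAL_S
    minute_rule_fired = per_minute.record_failure("203.0.113.5", t) or minute_rule_fired
    if blocked_at is None and per_hour.record_failure("203.0.113.5", t):
        blocked_at = t

print("per-minute rule fired:", minute_rule_fired)
print("per-hour rule fired after", blocked_at, "seconds")
```

A longer window catches the patient attacker, at the price of remembering more history for every address that ever fat-fingers a password.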

And in the Chinese view, they have all the time in the world. Some servers will fall to their attacks, others won’t. The ones that are compromised will likely be loaded with software that will, Manchurian-Candidate style, lie dormant until the Chinese government decides to break the Internet. And although servers like mine would provide excellent leverage, located as it is in a data center with high-speed access to the backbone, the bad guys have now discovered that home invasion provides a burgeoning opportunity as well. Consider the participation of refrigerators and thermostats in the recent attack on the Internet infrastructure on the East Coast of the United States and you begin to see the possibilities opened by a constant, patient probing of everything connected to the Internet.

I’ve been boning up on how to block the attack on my server; although in its current form the attack cannot succeed, I know I’ve been warned. The catch is I have to be very careful as I configure my safeguards — some mistakes would result in ME not being able to log in. That would be inconvenient, because if I’m unable to log in I won’t be able to fix my mistake. But like the Chinese, I can take things slowly and make sure I do it right.

Email Security 101: A Lesson Yet Unlearned

So it looks like the Russians are doing their best to help proudly racist Trump, by stealing (and perhaps altering) emails passed between members of the Democratic National Committee. It seems like the Democratic party preferred the candidate who was actually part of the party over a guy hitching his wagon to the Democrats to use that political machine as long as it was convenient to him.

But that’s not the point of this episode.

The point is this: Had the Democrats taken the time to adopt email encryption, this would not have happened. When the state department emails were hacked, the same criticism applies.

It is possible to:

- Render email unreadable by anyone but the intended recipient

- Make alteration of emails provably false

But nobody does it! Not even people protecting state secrets. I used to wonder what email breach was going to be the one that made people take email security seriously. I’m starting to think, now, that there is no breach bad enough. Even the people who try to secure email focus on the servers, when it’s the messages that can be easily hardened.

There is no privacy in email. There is no security in email. But there could be. Google could be the white hat in this scenario, but they don’t want widespread email encryption because they make money reading your email.

Currently only the bad guys encrypt their emails, because the good guys seem to be too fucking stupid.

Security Questions and Ankle-Pants

I’m that guy on Facebook, the party-pooper who, when faced with a fun quiz about personal trivia, rather than answer in kind reminds everyone that personal trivia has become a horrifyingly terrible cornerstone of personal security.

The whole concept is pure madness. Access to your most personal information (and bank account) is gated by questions about your life that may seem private, but are now entirely discoverable on the Internet, and by filling in those fun quizzes you’re helping the discovery process. Wanna guess how many of those Facebook quizzes are started by criminals? I’m going to err on the side of paranoia and say “lots”. Some are even tailored to specific bank sites and the like. Elementary school, pet’s name, first job. All that stuff is out there. Even if you don’t blab it to the world yourself, someone else will, and some innocuous question you answer about who your best friend is will lead the bad guy to that nugget.

There is nothing about you the Internet doesn’t already know. NOTHING. Security questions are simply an official invitation to steal all your stuff by people willing to do the legwork. Set up a security question with an honest answer, and you’re done for, buddy.

On the other hand, security questions become your friend if you treat them like the passwords they are. Whatever you type in as an answer should have nothing to do with the question. Otherwise, as my title suggests, you may as well drop ’em, bend over, and start whistlin’ dixie.

My computer offers me a random password generator and secure place to keep my passwords, FBI-annoying secure as long as I’m careful, but no such facility for security questions. I think there’s an opportunity there.

In the meantime, don’t ever answer a security question honestly. Where were you born? My!Father789Likes2GoFishin. Yeah? I’m from there, too! Never forget that some of those seemingly innocent questions out there on the Internet were carefully crafted to crack your personal egg. But if you never use personal facts to protect your identity, you can play along with those fun Facebook games, and not worry about first-tier evil.
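Until someone builds that security-question facility, rolling your own nonsense answers is easy. Here is a minimal sketch using Python’s standard `secrets` module; the `random_answer` function, the 24-character length, and the sample questions are just my choices for illustration. You still need somewhere safe to store the results, since you will never remember them.

```python
import secrets
import string


def random_answer(length=24):
    """Return a random string to use as a security-question 'answer'."""
    alphabet = string.ascii_letters + string.digits
    return "".join(secrets.choice(alphabet) for _ in range(length))


# Each question gets its own unrelated answer, exactly like a password.
answers = {
    "Where were you born?": random_answer(),
    "What was the name of your first pet?": random_answer(),
}
for question, answer in answers.items():
    print(question, "->", answer)
```

I stuck to letters and digits because some sites choke on punctuation in these fields, and because you may someday have to read the thing aloud to a human on the phone.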

An Internet Security Vulnerability that had Never Occurred to Me

Luckily for my productivity this afternoon, the Facebook page-loading feature was not working for me. I’d get two or three articles and that was it. But when it comes to wasting time, I am relentless. I decided to do a little digging and figure out why the content loader was failing. Since I spend a few hours every day debugging Web applications, I figured I could get to the bottom of things pretty quickly.

First thing to do: check the console in the debugger tools to see what sort of messages are popping up. I opened up the console, but rather than lines of informative output, I saw this:

Stop!

This is a browser feature intended for developers. If someone told you to copy-paste something here to enable a Facebook feature or “hack” someone’s account, it is a scam and will give them access to your Facebook account.

See https://www.facebook.com/selfxss for more information.

It is quite possible that most major social media sites have a warning like this, and all of them should. A huge percentage of successful “hacks” into people’s systems are more about social engineering than about actual code, and this is no exception. The console is, as the message above states, for people who know what they are doing. It allows developers to fiddle with the site they are working on, and even allows them to directly load code that the browser’s security rules would normally never allow.

These tools are built right into the browsers, and with a small effort anyone can access them. It would seem that unscrupulous individuals (aka assholes) are convincing less-sophisticated users to paste in code that compromises their Facebook accounts, perhaps just as they were hoping to hack someone else’s account.

I use the developer tools every day. I even use them on other people’s sites to track down errors or to see how they did something. Yet it never occurred to me that I could send out an important-sounding email and get people to drop their pants by using features built right into their browsers.

It’s just that sort of blindness that leads to new exploits showing up all the time, and the only cure for the blindness is to have lots of people look at features from lots of different perspectives. Once upon a time Microsoft built all sorts of automation features into Office that turned out to be a security disaster. From a business standpoint, they were great features. But no one thought, “you know, the ability to embed code that talks to your operating system directly into a Word doc is pretty much the definition of a Trojan Horse.”

So, FIRST, if anyone asks you to paste code into the developer’s console of your browser, don’t. SECOND, if you are in charge of a site that stores people’s personal data, consider a warning similar to Facebook’s. Heck, I doubt they’d complain if you straight-up copied it, link and all. THIRD, just… be skeptical. If someone wants you to do something you don’t really understand, don’t do it, no matter how important and urgent the request sounds. In fact, the more urgent the problem sounds, the more certain you can be that you are dealing with a criminal.

Back to 28: A Heck of a Security Hole in Linux

In December of 2008, some guy made a change to a program used by almost every flavor of Linux, and he (probably he, anyway) made a simple mistake. The program is called Grub2, and it’s the part that manages the user password business. For seven years it was broken.

It turns out that due to careless programming, hitting the backspace key could cause Grub2 to clear a very important chunk of memory. Normally this would cause the machine to reboot, but if you hit the backspace key exactly 28 times, it will reboot in the rescue shell.

In the rescue shell, one can perform all sorts of mischief on a machine, including installing spyware or just deleting everything. Yep, walk up to (almost) any Linux box, hit the backspace key 28 times, press return, and blammo. Its undies are around its ankles.

Worse, a long sequence of backspaces and characters can write all kinds of stuff into this critical memory area. Pretty much anything an attacker wants to write. Like, a little program.

Since (as far as I know) the attacker has to have physical access to the machine to press the keys or attach a device that can send a more complex key sequence automatically, most of the world’s Linux-based infrastructure is not directly at risk, as long as the Linux machines people use to control the vast network are well-protected.

The emergency patches have been out for a couple of weeks now, so if you use Linux please make sure you apply them. The change comes down to this: If there’s nothing typed, ignore the backspace key. Magical!
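To make that one-line fix concrete, here is a toy simulation in Python. It is emphatically not the Grub2 code (which is written in C); `read_line` and its `patched` switch are my own invention, and only the `cur_len` name comes from the real bug. The point is simply to watch the length counter go negative when nobody checks it.

```python
def read_line(keys, patched=True):
    """Simulate reading typed keys into a buffer.

    Returns (text, cur_len). With patched=False, a backspace on an empty
    line still decrements cur_len, which is the mistake described above.
    (Toy model for illustration, not the real Grub2 logic.)
    """
    buf = []
    cur_len = 0
    for key in keys:
        if key == "\b":
            if patched and cur_len == 0:
                continue  # the fix: nothing typed, so ignore the backspace
            cur_len -= 1
            if buf:
                buf.pop()
        else:
            buf.append(key)
            cur_len += 1
    return "".join(buf), cur_len


print(read_line("\b" * 28, patched=False))  # cur_len ends up at -28
print(read_line("\b" * 28))                 # cur_len stays at 0
print(read_line("ab\bc"))                   # ordinary editing still works
```

In Python a negative counter is merely embarrassing. In C, where that counter is used to decide which memory to touch, it means scribbling on memory in front of the buffer, and that is where the mischief starts.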

You can read more about it from the guys who found it: Back to 28: Grub2 Authentication 0-Day. It’s pretty interesting reading. The article gets steadily more technical, but you can see how a seemingly-trivial oversight can escalate to dire consequences.

The lesson isn’t that Linux sucks and we should all use OpenBSD (which is all about security), but it’s important to understand that we rely on millions and millions of lines of code to keep us safe and secure. Millions and millions of lines of code, often contributed for the greater good without compensation by coders we hope are competent, and not always reviewed with the skeptical eye they deserve. Nobody ever asked “what if cur_len is less than zero?”

The infamous Heartbleed was similar. Nobody asked the critical questions.

Millions and millions of lines of code. There are more problems out there, you can bank on that.

Will the World Break in 2016?

Well, probably not. The world isn’t likely to break until 2017 at the earliest. Here’s the thing: Our economy relies on secure electronic transactions and hack-proof banks. But if you think of our current cyber security as a mighty castle made of stone, you will be rightly concerned to hear that gunpowder has arrived.

A little background: there’s a specific type of math problem that is the focus of much speculation in computer science these days. It’s a class of problem in which finding the answer is very difficult, but confirming the answer is relatively simple.

Why is this important? Because pretty much all electronic security, from credit card transactions to keeping the FBI from reading your text messages (if you use the right service) depends on it being very difficult to guess the right decoder key, but very easy to read the message if you already have the key. What keeps snoops from reading your stuff is simply that it will take hundreds of years using modern computers to figure out your decoder key.
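Here is a pocket-sized version of that “hard to find, easy to check” idea, using the factoring of a number into two primes, which is the problem RSA leans on. It is a sketch in Python with laughably small numbers I picked for illustration; real keys use primes hundreds of digits long, and real attackers use much smarter methods than the trial division in my `find_factor`.

```python
def verify(n, p, q):
    """Checking a claimed answer takes a single multiplication."""
    return p > 1 and q > 1 and p * q == n


def find_factor(n):
    """Find the smallest factor of n the slow way, counting the steps."""
    steps = 0
    candidate = 2
    while candidate * candidate <= n:
        steps += 1
        if n % candidate == 0:
            return candidate, steps
        candidate += 1
    return n, steps  # no smaller factor found, so n itself is prime


p, q = 10007, 10009          # two small primes: the "secret"
n = p * q                    # the public number anyone can see

factor, steps = find_factor(n)
print("found factor", factor, "after", steps, "tries")
print("checking the answer:", verify(n, factor, n // factor))
```

Every digit you add to the primes multiplies the searching work by about ten, while the checking stays one multiplication. That lopsidedness is the whole castle wall.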

That may come to a sudden and jarring end in the near future. You see, there’s a new kind of computer in town, and for solving very specific sorts of problems, it’s mind-bogglingly fast. It won’t be cheap, but quantum computers can probably be built in the near future specifically tuned to blow all we know about data encryption out of the water.

Google and NASA got together and made the D-Wave two, which, if you believe their hype, is the first computer that has been proven to use quantum mechanical wackiness to break through the limits imposed by those big, clunky atoms in traditional computing.

Pictures abound of the D-Wave (I stole this one from fortune.com, but the same pic is everywhere), which is a massive refrigerator with a chip in the middle. The chip has to be right down there at damn near absolute zero.

The chip inside D-Wave two was built and tuned to solve a specific problem very, very quickly. And it did. Future generations promise to be far more versatile. But it doesn’t even have to be that versatile if it is focussed on breaking 1024-bit RSA keys.

It is entirely possible that the D-Wave six will be able to bust any crypto we have working today. And let’s not pretend that this is the only quantum computer in development. It’s just the one that enjoys the light of publicity. For a moment imagine that you were building a computer that could decode any encrypted message, including passwords and authentication certificates. You’d be able to crack any computer in the world that was connected to the Internet. You probably wouldn’t mention to anyone that you were able to do that.

At Microsoft, their head security guy is all about quantum-resistant algorithms. Quantum computers are mind-bogglingly fast at solving certain types of math problems; security experts are scrambling to come up with encryption based on some other type of hard-to-guess, easy-to-confirm algorithm that is intrinsically outside the realm of quantum mojo. But here’s the rub: it’s not clear that other class of math exists.

(That same Microsoft publicity piece is interesting for many other reasons, and I plan to dig into it more in the future. But to summarize: Google wins.)

So what do we do? There’s not really much we can do, except root for the banks. They have resources, they have motivation. Or, at least, let’s all hope that the banks even know there’s a problem yet, and are trying to do something about it. Because quantum computing could destroy them.

Eventually we’ll all have quantum chips in our phones to generate the encryption, and the balance of power will be restored. In the meantime, we may be beholden to the owners of these major-mojo-machines to handle our security for us. Let’s hope the people with the power to break every code on the planet use that power ethically.

Yeah, sorry. It hurts, but that may be all we have.

How Secure is Your Smoke Detector?

You probably heard about that HeartBleed thing a few months ago. Essentially, the people who build OpenSSL made a really dumb mistake and created a potentially massive security problem.

HeartBleed made the news, a patch came out, and all the servers and Web browsers out there were quickly updated. But what about your car?

I don’t want to be too hard on the OpenSSL guys; almost everyone uses their code and apparently (almost) no one bothers to pitch in financially to keep it secure. One of the most critical pieces of software in the world is maintained by a handful of dedicated people who don’t have the resources to keep up with the legion of evil crackers out there. (Google keeps their own version, and they pass a lot of security patches back to the OpenSSL guys. Without Google’s help, things would likely be a lot worse.)

For each HeartBleed, there are dozens of other, less-sexy exploits. SSL, the security layer that once protected your e-commerce and other private Internet communications, has been scrapped and replaced with TLS (though it is still generally referred to as SSL), and now TLS 1.0 is looking shaky. TLS 1.1 and 1.2 are still considered secure, and soon all credit card transactions will use TLS 1.2. You probably won’t notice; your browser and the rest of the infrastructure will be updated and you will carry on, confident that no one can hack into your transactions (except many governments, and about a hundred other corporations – but that’s another story).
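On a machine that actually gets updates, refusing the shaky old protocol versions is a couple of lines. Here is a minimal sketch using Python’s standard `ssl` module (the `minimum_version` setting needs Python 3.7 or later). It only builds the settings; it does not connect to anything, and what your browser or bank really does is its own business.

```python
import ssl

# Start from the library's sensible defaults: certificates are checked
# and the host name has to match.
context = ssl.create_default_context()

# Refuse anything older than TLS 1.2, so no SSL, TLS 1.0, or TLS 1.1.
context.minimum_version = ssl.TLSVersion.TLSv1_2

print("oldest version accepted:", context.minimum_version.name)
print("certificates required:", context.verify_mode == ssl.CERT_REQUIRED)
```

The catch, of course, is that somebody has to be able to change those two lines later, when TLS 1.2 starts looking shaky too.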

So it’s a constant march, trying to find the holes before the bad guys do, and shoring them up. There will always be new versions of the security protocols, and for the most part the tools we use will update and we will move on with our lives.

But, I ask again, what about your car?

What version of SSL does OnStar use, especially in older cars? Could someone intercept signals between your car and the mother ship, crack the authentication, and use the “remote unlock” feature and drive away with your fancy GMC Sierra? I’ve heard stories.

You know that fancy home alarm system you have with the app that allows you to disarm it? What version of OpenSSL is installed in the receiver in your home? Can it be updated?

If your thermostat uses outdated SSL, will some punk neighbor kid download a “hijack your neighbor’s house” app and turn your thermostat up to 150? Can someone pull a password from your smoke detector system and try it on all your other stuff (another reason to only use each password once)?

Washer and dryer? The Infamous Internet Toaster? Hey! The screen on my refrigerator is showing ads for porn sites!

Everything that communicates across the Internet/Cloud/Bluetooth/whatever relies on encrypting the data to keep malicious folks away from your stuff. But many of the smaller, cheaper devices (and cars) may lack the ability to update themselves when new vulnerabilities are discovered.

I’m not saying all of these devices suck, but I would not buy any “smart” appliance until I knew exactly how they keep ahead of the bad guys. If the person selling you the car/alarm/refrigerator/whatever can’t answer that question, walk away. If they don’t care about your security and privacy, they don’t deserve your business.

I’ve been told, but I have no direct evidence to back it up, that much of the resistance in the industry to the adoption of Apple’s home automation software protocols (dubbed HomeKit) is because of the over-the-top security and privacy requirements. (Nest will not be supporting HomeKit, for instance.) In my book, for applications like this, there’s no such thing as over-the-top.

Another Stupid Security Breach

Recently, the State Department’s emails were hacked. Only the non-classified ones (that we know about), but here’s the thing:

Why the hell is the State Department not encrypting every damn email? Why does ANY agency not encrypt its emails? It’s a hassle for individuals to set up secure emails with their friends, but secure email within an institution is not that hard.

JUST DO IT, for crying out loud!

E-mail Privacy

Apparently, it is simply not possible for an American company to offer secure email. Sooner or later the United States Government is going to come knocking, and they’re not above judicial flim-flams to get what they want.

Google doesn’t want your email encrypted, either. They want to read it and sell what you’ve written to advertisers.

But there’s nothing stopping you from encrypting your own email, except the inconvenience of getting your communication channels set up with your friends. Unfortunately, that’s still a PITA, especially for friends who cling to browser-based email reading.

My perfect world: every email is encrypted. There is no reliance on a central authority for the encryption. No email company or certificate authority that can be hacked or subpoenaed.

My perfect world may be a tiny bit closer to reality: Apple has announced that the next version of the Mac OS will have streamlined email encryption. S/MIME is already supported in Apple’s Mail app, but it’s not nearly as simple as it should be. If I were in charge, setting up your computer would automatically generate your own identity certificate, and every email you send would have it attached. With a single click anyone who got that email would set up a secure, encrypted email connection with you. And that would be that.

We’ll see how close Apple comes. But it gladdened my crusty old heart to see a big company at least talking about the issue.

Just say No

Google just asked to be allowed access to fairly low-level functionality on my computer. There’s a chance I might have benefitted from saying yes, but there was no explanation at all given for why the Goog should have that access. No value proposition whatsoever, just “Google wants to use the accessibility interface”. (That’s not the exact quote, which I now regret not saving.)

Honestly, I don’t even know how to prove the request came from Google. So if you get something like that, do like I did. Say no.

Building the Web of Trust – the First Baby Step

A few weeks ago I wrote about the way secure connections on the Web are set up and why the system as it stands is vulnerable to abuse, or total collapse. I’d like to spend a little time now on specific things we can all do to make the Web safer for everyone. This attempt to turn the ocean liner before it hits the iceberg may well be futile, which will inevitably lead to governments being the guardians of (and privy to) almost all our private conversations and transactions.

So, I have to try.

Security and privacy are related and a key tool for both is encryption. A piece of data is scrambled up and you need a special key (which is a huge number) to unscramble it. Modern systems use two keys, and when the data is scrambled with one, it can be unscrambled with the other.

When I get a message from Joe, I can use the public half of his pair of keys to unscramble it. If that works, then only someone who has Joe’s secret key could have sent the message. Joe has effectively “signed” the message and I can tell he wrote it and that it hasn’t been tampered with since.
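For the curious, here is the signing trick in miniature. This is a toy sketch in Python of textbook RSA, with two well-known Mersenne primes standing in for Joe’s secret numbers; the function names and the sample message are mine. Real software uses far bigger keys plus padding schemes I am skipping entirely, so please treat this as an illustration of the two-key idea and nothing more.

```python
import hashlib

# Toy key pair. Real keys are built from much larger, randomly chosen primes.
p = 2**61 - 1
q = 2**89 - 1
n = p * q                           # part of both halves of the key
e = 65537                           # Joe's public half: anyone may know it
d = pow(e, -1, (p - 1) * (q - 1))   # Joe's secret half (needs Python 3.8+)


def digest(message):
    """Boil the message down to a number smaller than n."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % n


def sign(message, secret_key):
    """Scramble the digest with the secret half of the key pair."""
    return pow(digest(message), secret_key, n)


def verify(message, signature, public_key):
    """Unscramble with the public half and compare against the message."""
    return pow(signature, public_key, n) == digest(message)


message = b"Meet at noon. -Joe"
signature = sign(message, d)

print("genuine message checks out:", verify(message, signature, e))
print("altered message checks out:", verify(b"Meet at one. -Joe", signature, e))
```

Notice that the check only needs `e` and `n`, the public half. That is why it matters so much that the public key I have for Joe really did come from Joe.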

The catch is, if someone gave me a bogus key and said it came from Joe, then all those messages that supposedly came from Joe actually came from someone else. These days most of the certificates (the files that contain the keys) out there are created and confirmed by a handful of companies and governments, and the software we use trusts these certificates implicitly. You are not even asked whether you think Comodo is trustworthy and diligent, or whether any of its subsidiaries has been hacked (which happened); the decision has been made for you.

It is conceivable that we can replace this centralized authority system, but so far it’s not simple. Practically speaking, nothing big is going to change until things are much more obvious. Still, one of the first stepping-stones is in place, so we may as well get that part into common use, which would accelerate the rest of the process.

Here’s my thesis: With technology today, all emails should be signed, and any email to someone you know should also be encrypted. I look forward to the day I can reject all unsigned email, because it will be spam. As a side effect, jerssoftwarehut.com won’t get blacklisted in spam filters because some other company used that address in the “from” field of an email they sent. Email is fundamentally flawed, the big companies are too busy arguing about how to fix it, and it’s time to do it ourselves.

How do we get to this happy place? It’s actually pretty simple. It takes two steps: create a pair of keys for yourself and turn on S/MIME in your email program. If everyone did those things, the Internet world would be a much happier place. Plus, once we all have our keys, the next phase in revamping Web security, building the Web of Trust, will be much simpler (once the software manufacturers realize people actually want this — another reason we should all get our keys made).

If it’s so easy, why isn’t everyone doing it already?

All the major email programs support S/MIME, but they don’t seem to think that ordinary folks like you and me want it. All the documentation and tools are aimed at corporate IT guys and other techno-wizards.

I’m going to go through the process in general terms, then show specific steps for the operating system I know best. I borrowed from several articles which are listed at the bottom, but my process is a little different.

Step 1: Get your keys. Some of the big Certificate Authorities offer free keys, and while that route is probably easier and absolutely addresses our short-term goals, it does nothing to address the “what if the CA system breaks?” problem. So, for long-term benefits, and getting used to our new “I decide whom I trust” mentality, I recommend that we all generate our own certificates and leave the central authority out of it.

The catches in Step 1:

- It’s not obvious how one generates a key in the first place, gets it installed correctly, and copies the key to all their various devices.

- For Windows, it may depend on what software you use to read your email. Here’s an article for Mozilla (Firefox and Thunderbird): Installing an SMIME Certificate.

- For Mac, I’ll be publishing instructions soon.

- I’ve read (but not confirmed) that Thunderbird (the Mozilla email app) requires that certificates be signed by a CA.

- You can create your own CA – basically you just make your certificate so it says “Yeah, I’m a Certificate Authority”.

- If I know you personally, you can use my CA, which I created just yesterday for my own batch of certificates. If you’re interested let me know and I’ll tell you how. It’s pretty simple.

- When you generate your own keys, they won’t be automatically trusted by the world at large. That’s the point. The people you interact with will have to decide whether to trust your certificate. In the near term, this could be a hassle. It’s something people just haven’t had to deal with before. You can:

- Educate them, get them on board, and not worry too much if people get an “untrusted signature” message and don’t know what to do about it. That way they’ll at least notice there’s a signature at all.

For all those catches, there’s an alternative: go back to using a trusted Certificate Authority like Comodo to generate your certificate, and at least get used to signing and encrypting everything. Maybe later you can switch to a self-signed certificate.

Ironically, in the case of these free certificates from the big companies, they’re probably less trustworthy than one you generate yourself. All the CA confirms is that they sent the cert to the associated email address after they made it. But, our software trusts them for better or worse, and if that makes adoption of secure communication happen faster, then I’m good with that.

The catches in Step 2:

Really, there aren’t any. Somewhere in the preferences of your email reader you can turn on S/MIME (on Mac, installing your certificate seems to do that). You can probably set it to sign everything — and you should. The next step is to learn how to interact with the signed messages you receive. Do you trust the signature? (Don’t take this lightly – if possible confirm the email you got through another means. You only have to do this once.) If so, you can tell your computer and it will save your friend’s public key. Now you can send an encrypted message back to that person, and they’ll be able to trust your key, too. Between you two, you’ll never have to think about it again. Your communications will simply be secure, with no added effort at all.
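As a small illustration of the "is this message signed at all?" question, here is a minimal sketch using only Python's standard `email` package. It looks at the headers to see whether a message claims to carry an S/MIME signature. It does not verify the signature or decide whether to trust it; that part is still up to you and your mail program. The function name and the sample messages are made up for the example.

```python
from email import message_from_string
from email.message import Message

# Content types used for S/MIME signatures (with and without the older
# "x-" prefix).
SIGNATURE_PROTOCOLS = {
    "application/pkcs7-signature",
    "application/x-pkcs7-signature",
}
ENVELOPE_TYPES = {
    "application/pkcs7-mime",
    "application/x-pkcs7-mime",
}


def claims_smime_signature(msg: Message) -> bool:
    """Return True if the headers say the message is S/MIME signed.

    This only inspects the Content-Type header. It does NOT check that the
    signature is valid or that the signer is who they say they are.
    """
    content_type = msg.get_content_type()
    if content_type == "multipart/signed":
        # Detached signature: readable body plus a separate signature part.
        protocol = msg.get_param("protocol", failobj="")
        return str(protocol).lower() in SIGNATURE_PROTOCOLS
    if content_type in ENVELOPE_TYPES:
        # Opaque signature: body and signature wrapped together.
        smime_type = msg.get_param("smime-type", failobj="")
        return str(smime_type).lower() == "signed-data"
    return False


signed = message_from_string(
    "From: friend@example.com\r\n"
    'Content-Type: multipart/signed; protocol="application/pkcs7-signature";'
    ' micalg=sha-256; boundary="b"\r\n'
    "\r\n"
    "--b\r\nContent-Type: text/plain\r\n\r\nHello\r\n"
    "--b\r\nContent-Type: application/pkcs7-signature\r\n\r\nAAAA\r\n"
    "--b--\r\n"
)
plain = message_from_string(
    "From: stranger@example.com\r\nContent-Type: text/plain\r\n\r\nBuy now!\r\n"
)

print(claims_smime_signature(signed))  # True
print(claims_smime_signature(plain))   # False
```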

Note:

I intended to put the step-by-step instructions for Mac here, but it’s a beautiful Sunday afternoon and even though I’m sitting outside, I have the urge to go do something besides type technical stuff into a computer, prepare a list of references, and all that stuff. So, that will have to wait a day or two. It’s time to let this episode run free!

How Stupid do you Think I Am?

So I was looking around for a Web service that could take a string of text and return an MD5 Hash of that string, and I found something disturbing.

An MD5 Hash is a big number that is generated by doing crazy math on the original information. It has two good qualities – when you start with the same text you always get the same result, and it’s pretty much impossible to tell what the text was from the number.
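To make that concrete, here is a minimal sketch using Python's standard `hashlib` module, which runs entirely on your own machine. The helper name `md5_hex` is just something made up for the example.

```python
import hashlib


def md5_hex(text: str) -> str:
    """Return the MD5 hash of `text` as a 32-character hex string."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


# Same text in, same number out, every time.
print(md5_hex("hello"))                      # 5d41402abc4b2a76b9719d911017c592
print(md5_hex("hello") == md5_hex("hello"))  # True

# A tiny change to the text gives a completely different number.
print(md5_hex("hello") == md5_hex("hellp"))  # False
```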

A lot of places store the hash of your password, rather than the password itself. When you type in your password, it’s hashed, and the resulting number is compared with the one in their database. If the two match then you’re in.

But there is one way to crack the hash I hadn’t considered: keep a database of known strings and the resulting hash. It had never occurred to me to try to keep a table so huge, but with access to this information you could pretty easily crack passwords that lots of people use.
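The idea is simple enough to sketch in a few lines of Python. This is a toy version with a three-entry table; the real ones are enormous. The function names and the candidate list are made up for illustration.

```python
import hashlib


def md5_hex(text: str) -> str:
    """Return the MD5 hash of `text` as a hex string."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def build_lookup_table(candidates):
    """Map each candidate's hash back to the candidate itself."""
    return {md5_hex(candidate): candidate for candidate in candidates}


def reverse_lookup(hash_hex, table):
    """Return the original text if its hash is in the table, else None."""
    return table.get(hash_hex)


# A (very) small table of passwords that lots of people use.
table = build_lookup_table(["123456", "password", "letmein"])

# A hash of a common password is found instantly.
print(reverse_lookup("5f4dcc3b5aa765d61d8327deb882cf99", table))  # password

# Anything not already in the table stays secret.
print(reverse_lookup(md5_hex("correct horse battery staple"), table))  # None
```

No math gets reversed here. The table only works for text somebody already thought to put in it, which is why it is so effective against popular passwords and useless against unusual ones.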

In my search for a hashing service, I came across one such Web site. Also on that site: a service to generate a hash for you. The message: “Hey! We keep a database of hashes to render them useless! You want us to calculate a hash for you?”

Um… No thanks?

At this point, I have to advise: stay away from Web-based hash generators. I know you were about to go and use one.